On the morning of March 25, 2026, memory chip stocks opened sharply lower. Micron fell 9.88% by close. SanDisk dropped 7.04%. Samsung lost nearly 5% in Seoul. SK Hynix shed 6%. The combined market cap loss ran into tens of billions of dollars, and the trigger was not a supply chain disruption or a regulatory ruling.

It was a blog post from Google Research.

The internet immediately compared it to Pied Piper, the fictional compression startup from HBO’s Silicon Valley. Cloudflare’s CEO Matthew Prince called it “Google’s DeepSeek moment.” The researchers who built it called it TurboQuant.

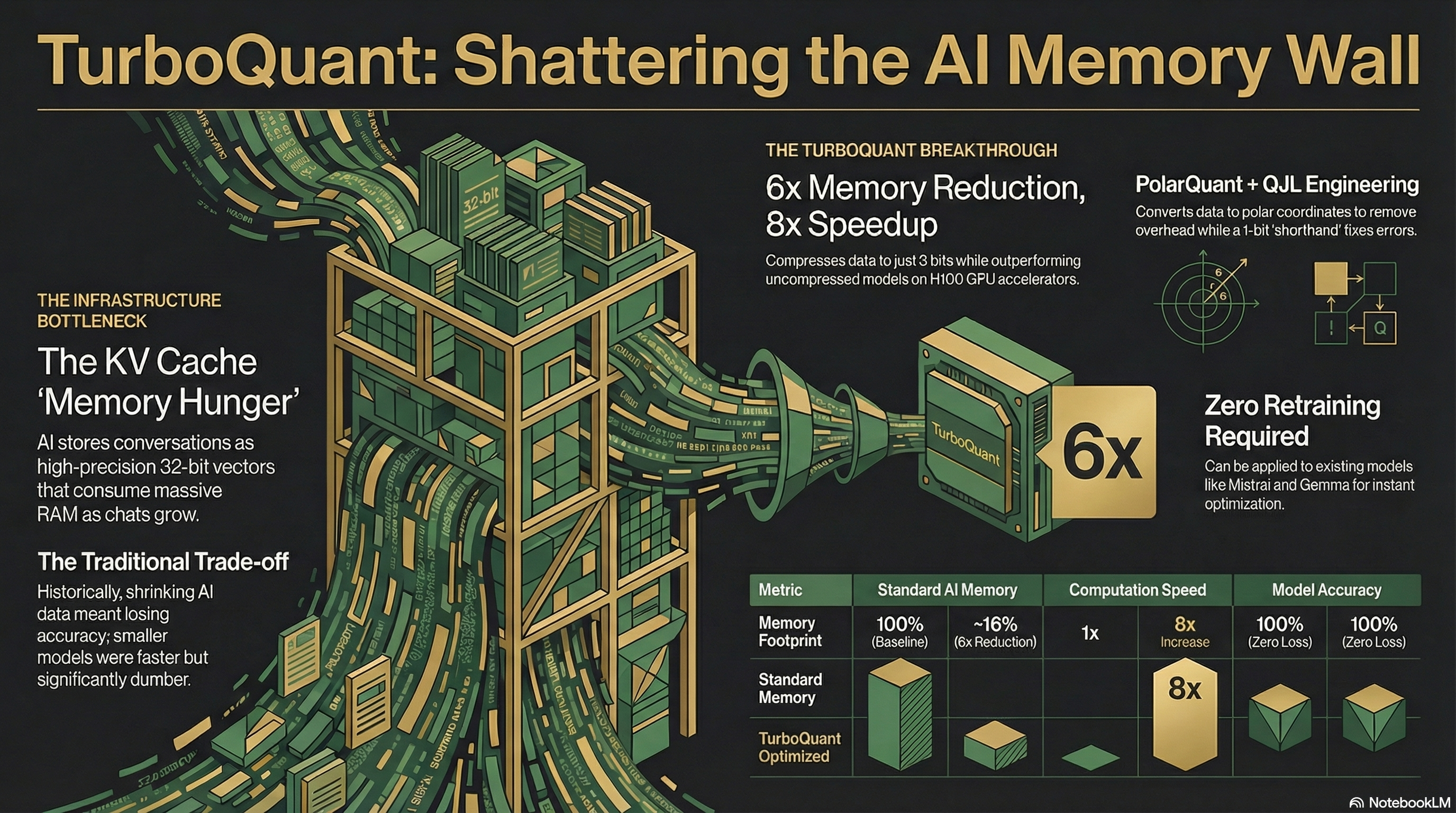

The problem nobody talked about enough

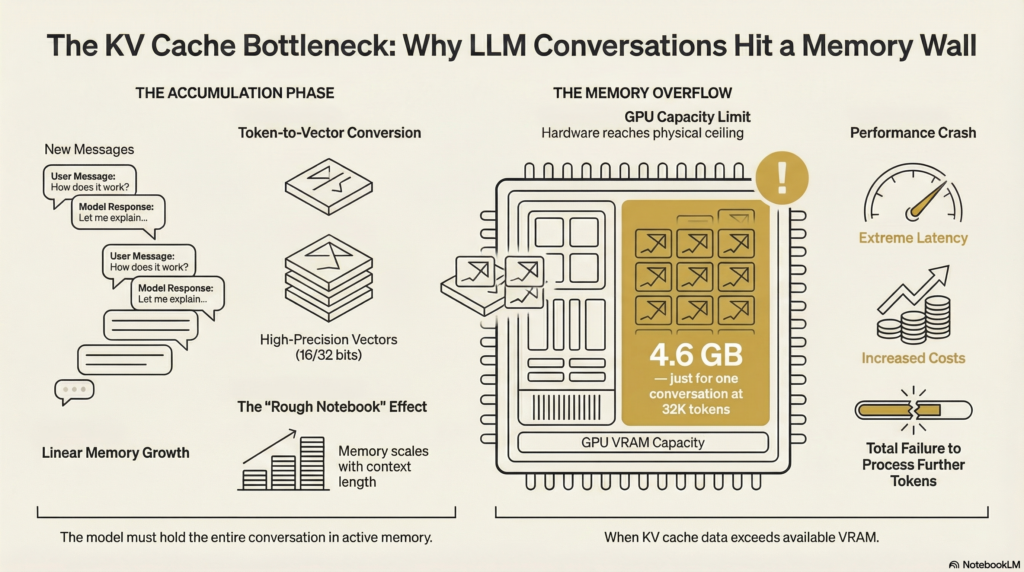

Every time a large language model generates a response, it computes something called a key-value cache. Think of it as the model’s working memory for a conversation. Each token you send, and each one the model has already processed, gets stored there so the model does not have to recompute everything from scratch on every new word it generates.

The math is unforgiving. For an 8B parameter model running at 32,000 tokens of context, the KV cache alone consumes around 4.6 GB of VRAM. That is before model weights, before any computation. Just the memory of the conversation. Scale that to multiple concurrent users, or to the context windows now measured in hundreds of thousands of tokens, and you run out of hardware fast.

Existing solutions either do not compress aggressively enough or introduce accuracy trade-offs that are hard to predict. Engineers building AI products have largely accepted this as a fixed cost, paying for more RAM, buying more H100s, keeping context windows shorter than they want. TurboQuant is a direct answer to that.

How it works

The algorithm was built by Amir Zandieh, a research scientist at Google, and Vahab Mirrokni, a VP and Google Fellow, with collaborators at New York University. It runs in two stages.

The first uses a technique called PolarQuant. Standard quantization methods store vectors in Cartesian coordinates (X, Y, Z values) and need to store extra “normalization constants” alongside each data block to decompress it correctly. Those constants add overhead, sometimes 1 to 2 bits per value, which can cancel out most of the compression gain.

PolarQuant sidesteps this entirely. It first applies a random rotation to the vectors. After rotation, the coordinates follow a predictable statistical distribution that is known in advance. Because the shape of the data is now predictable, the system no longer needs to store per-block normalization constants. It then converts coordinates into polar form, radius and angle, and applies an optimal quantizer to each component independently. The result is high-quality compression with zero metadata overhead.

The second stage handles what PolarQuant leaves behind. Any quantization introduces a small amount of error. In the attention mechanism, where the model decides which parts of a prompt matter most, even small systematic biases in those error terms accumulate across tokens and degrade output.

This is where QJL (Quantized Johnson-Lindenstrauss) comes in. QJL takes the residual error left by PolarQuant and compresses it to a single sign bit per dimension, either +1 or -1. A single bit sounds trivial, but the mathematics preserves the essential angular relationships between data points, producing an unbiased estimator for the attention calculation. The model’s reasoning process stays statistically equivalent to what it would have been at full precision.

PolarQuant handles the heavy compression. QJL corrects the bias. Together, the effective bit-width across both stages works out to approximately 3.5 bits per value, compressed from 16.

What the benchmarks show

Google tested TurboQuant on Gemma and Mistral across five long-context benchmarks: LongBench, Needle In A Haystack, ZeroSCROLLS, RULER, and L-Eval.

At 3-bit quantization, TurboQuant achieved at least a 6x reduction in KV cache memory versus standard FP16 storage. On Needle In A Haystack, which asks a model to retrieve a single sentence buried inside 100,000 words of text, TurboQuant matched uncompressed models with perfect recall. On the LongBench suite, covering question answering, code generation, and summarization, it matched or outperformed the KIVI baseline across every task.

On NVIDIA H100 GPUs, 4-bit TurboQuant delivered up to 8x speedup in attention logit computation compared to 32-bit unquantized keys.

The method requires no retraining and no calibration data. It is entirely data-oblivious. You do not need to fine-tune an existing model before applying it. You insert it as a drop-in layer on KV cache read and write operations, and it works.

Independent developers had community implementations running on GitHub within 24 hours of the announcement. On NVIDIA’s DGX Spark hardware, one community experiment reported 13 to 21% faster inference than FP8 with identical accuracy. On Apple Silicon via MLX, 100% exact match was reported across context lengths from 8,500 to 64,000 tokens.

What it does not do

TurboQuant only targets inference. Training large models still requires enormous amounts of high-bandwidth memory, and this research does not change that.

There are also model-size caveats worth knowing. At 4-bit, quality on models above 3B parameters is effectively indistinguishable from FP16. At 3-bit, quality starts degrading noticeably on models smaller than 8B. Models below 3B parameters need careful testing before applying aggressive compression.

The official open-source release is expected in Q2 2026, likely timed around the paper’s formal presentation at ICLR 2026 in Rio de Janeiro, scheduled for late April. Community implementations are already live, but production deployments will largely wait for the official code.

What it means for AI builders

VentureBeat estimated TurboQuant could cut cloud inference costs by more than 50% for long-context workloads. Forrester principal analyst Biswajeet Mahapatra put it plainly in Infoworld: “Enterprises constrained by GPU memory rather than compute could run longer context windows on existing hardware, support higher concurrency per accelerator, or reduce total GPU spend for the same workload.”

The practical scenario that keeps coming up in analyst notes: a team spending $50,000 per month on GPU compute for LLM inference might achieve the same throughput for under $10,000 once TurboQuant reaches production infrastructure.

For agentic AI systems, where long context windows are a hard requirement, not a nice-to-have, this directly changes what hardware budgets are needed. The same GPU cluster that today serves 100 concurrent long-context sessions could serve 600. Or you could run a model six times larger on the same hardware.

The algorithm also applies to vector search and RAG pipelines, not just raw LLM inference. For any system that retrieves information from large embedding indices, TurboQuant’s compression improves recall while cutting the memory footprint of the index.

Why the chip market reacted the way it did

Micron had just reported blockbuster earnings on March 18, with revenue up 196% year-over-year to $23.9 billion and non-GAAP EPS of $12.20 against an $8.80 analyst estimate. The stock had surged more than 250% over the previous year on AI memory demand. TurboQuant complicated the story.

The core fear is straightforward. Memory chip companies had been pricing in the assumption that AI memory demand would scale linearly with model size and context length. If a software algorithm can compress that demand by 6x, the growth trajectory that justified their valuations needs recalculating.

Analyst reactions were split. Wells Fargo’s Andrew Rocha called TurboQuant a direct attack on the memory cost curve, then stopped short of a bearish conclusion because broad adoption has not happened yet. Morgan Stanley argued the market reaction was excessive and that cheaper inference would ultimately drive more AI deployments, raising total memory demand over time. TrendForce, the memory market research firm, predicted TurboQuant would accelerate adoption of long-context applications and drive structural growth in high-bandwidth memory, not reduce it.

The Register noted the chip declines were also partly driven by helium supply chain disruptions from the Middle East conflict, making it difficult to attribute the full sell-off to TurboQuant alone. The actual picture is messier than the headline numbers suggest.

What is clear is that TurboQuant introduces a credible software pathway to lower memory intensity. Whether that path reduces total memory demand or triggers Jevons paradox dynamics, where more efficient resources get consumed in greater volume, is what the market is still working out.

Why the theoretical grounding matters

Most LLM optimization papers show strong benchmark numbers that do not hold up in production. TurboQuant is different in a specific way. The paper proves it operates within a factor of approximately 2.7 of the Shannon information-theoretic lower bound for vector quantization. That means it is approaching the mathematical ceiling of what is achievable without losing information. The efficiency guarantees are not empirical claims based on test sets. They are provable results.

That rigor is what distinguishes it from a good benchmark paper. It also explains why independent implementations across different hardware platforms produced consistent results so quickly.

The formal presentation at ICLR 2026 will be followed by scrutiny from the broader research community. Integration into vLLM, Hugging Face’s inference stack, and llama.cpp will determine how quickly the efficiency gains reach deployed AI products. That timeline starts in Q2 2026.